In today's digital era, data is everywhere. Businesses gather information about customers, sales, websites, and numerous other sources. But what happens when that data contains errors? Poor data can lead to poor decisions, lost revenue, and dissatisfied customers. This is where data validation tools come in.

Data validation tools are software packages that verify your data to ensure that it is correct, complete, and useful. Think of them as quality inspectors for your data.

With data validation tools in place, your business can have confidence in the information it works with every day. The tools save time, minimize errors, and help your team make better decisions. Whether you run a small store or a large company, clean, high-quality data is critical to success. In this article, we discuss the top 10 data validation tools available today.

We will describe their purpose, why they matter, and how to select the right one for your business. Let's dive in and find out how these tools can change the way you handle data.

What Are Data Validation Tools?

Data validation tools are computer programs that check that your data is accurate and of good quality. They act as an intelligent assistant that reviews information before it enters your system.

These tools check your data against a set of rules. For example, they may ensure that:

- Names do not contain numbers.

- Email addresses follow a correct format.

- Age values fall within a realistic range.

- Mandatory fields are not left blank.

- Dates are valid and in the correct format.
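
The rules listed above can be sketched in a few lines of Python. This is only an illustration of rule-based checking, not how any particular tool implements it. The field names (`name`, `email`, `age`, `signup_date`), the 0 to 120 age range, the simplified email pattern, and the `YYYY-MM-DD` date format are all assumptions made for the example.

```python
import re
from datetime import datetime

# Hypothetical field names and limits, chosen only for illustration.
REQUIRED_FIELDS = ("name", "email", "age", "signup_date")
# Deliberately simple pattern: something@something.something
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def _text(value) -> str:
    """Normalize a raw field value to a stripped string ('' for None)."""
    return "" if value is None else str(value).strip()


def validate_record(record: dict) -> list[str]:
    """Return a list of rule violations for one record (empty if clean)."""
    errors = []

    # Mandatory fields must not be left blank.
    for field in REQUIRED_FIELDS:
        if _text(record.get(field)) == "":
            errors.append(f"{field}: required field is blank")

    # Names must not contain numbers.
    if any(ch.isdigit() for ch in _text(record.get("name"))):
        errors.append("name: must not contain numbers")

    # Email addresses must follow the expected format.
    email = _text(record.get("email"))
    if email and not EMAIL_PATTERN.match(email):
        errors.append("email: invalid format")

    # Age values must fall within a realistic range.
    age = _text(record.get("age"))
    if age:
        try:
            if not 0 <= int(age) <= 120:
                errors.append("age: outside realistic range 0-120")
        except ValueError:
            errors.append("age: not a whole number")

    # Dates must be real calendar dates in the expected format.
    date_text = _text(record.get("signup_date"))
    if date_text:
        try:
            datetime.strptime(date_text, "%Y-%m-%d")
        except ValueError:
            errors.append("signup_date: not a valid YYYY-MM-DD date")

    return errors
```

A record passes when `validate_record` returns an empty list. Each message names the field and the rule it broke, which is roughly the kind of feedback a validation tool gives.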

Data validation tools check your data at various stages. They can verify information when someone enters it into a form, when data is transferred between systems, or when large files are imported. This continuous verification helps keep bad data out of your databases.

Benefits of Using Data Validation Tools

- Enhanced Data Accuracy: Data validation tools catch errors that people make during data entry. Each record must pass quality criteria before it enters your system.

- Faster Processing Speed: Automation can check thousands of records in minutes. Your team can focus on valuable work rather than manual reviews.

- Reduced Operational Costs: Detecting mistakes early avoids the cost of correcting them later in business operations. Less rework means lower costs for your business.

- Better Reporting Quality: Clean data produces reliable reports and useful business insights. Sound analytics support accurate strategic planning and decision-making.

- Increased Productivity: Teams spend less time fixing data problems and errors. The time saved goes to work that grows the business.


Top 10 Data Validation Tools

1. Talend Data Quality

Talend Data Quality is an all-purpose open-source platform that helps companies keep data clean and correct across all systems. It integrates with Talend's ETL suite, which makes it a good fit for companies that already use Talend products. The tool provides strong data profiling features that let you get acquainted with your data before applying validation rules.

It supports real-time monitoring and cleansing, keeping your data accurate as it moves through your systems. Talend's interface is also user-friendly, which makes collaboration between technical teams and business units easier.

Key Features

- Real-time data profiling capabilities.

- Custom validation rule creation.

- Seamless integration with Talend Studio.

Pros

- Free or inexpensive to adopt.

- Good community support available.

- Strong reporting alongside ETL tools.

Cons

- Steep initial learning curve.

- Setup requires technical knowledge.

- Limited support for beginners.

Best For: Enterprises

Pricing: Free (open-source) with paid enterprise options available

Website: https://www.talend.com

2. Informatica Data Quality

Informatica Data Quality is an enterprise-grade data quality management solution used by thousands of companies across the globe. It offers a full toolkit for data profiling, cleansing, matching, and ongoing monitoring across hybrid environments.

The platform applies artificial intelligence to learn patterns in the data and improve quality automatically, which considerably reduces manual effort. Informatica handles complicated data relationships efficiently and offers full lineage tracing so you can see how data flows through your systems.

Key Features

- Intelligent, automated data discovery.

- End-to-end business rule validation.

- Full data lineage tracking.

Pros

- Enterprise-grade flexibility and performance.

- Scales very well.

- Extensive platform integration.

Cons

- Higher price point overall

- Complex initial setup.

- Requires dedicated administrator resources.

Best For: Corporations

Pricing: Custom pricing based on needs

Website: https://www.informatica.com

3. Datameer

Datameer streamlines the preparation and validation of big data, particularly for organizations working with Snowflake and Hadoop. It lets data engineers and analysts explore, clean, and verify data through an intuitive spreadsheet-like interface without writing complex code.

The visual workflow builder makes data validation processes easy for anyone to create, understand, and edit. Datameer handles large-scale data transformations while maintaining data quality standards throughout the process. Its collaboration features also let teams work together on data validation projects in real time.

Key Features

- No-code data transformation interface.

- Native Snowflake integration.

- Collaborative, interactive data discovery.

Pros

- Very easy-to-navigate interface.

- Fast implementation and deployment.

- Strong big data support

Cons

- Limited to specific platforms.

- Fewer advanced validation features.

- Not ideal for complex rules

Best For: Analysts

Pricing: Contact for custom quotes

Website: https://www.datameer.com

4. QuerySurge

QuerySurge focuses on automating data testing and validation for ETL processes, which makes it a popular choice for data warehouse teams. It verifies that data extracted from source systems and transformed into target systems through business rules remains accurate and complete.

The tool's smart comparison engine can detect even slight differences between source and target data, surfacing errors that manual testing tends to miss. QuerySurge can run as part of continuous integration and continuous delivery (CI/CD) pipelines and fits well with modern DevOps practices.
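
To make the idea of source-to-target comparison concrete, here is a minimal Python sketch. It does not use QuerySurge or reproduce its engine. The function name `compare_source_to_target`, the `key` column, and the `transform` callback that stands in for a business rule are all hypothetical.

```python
def compare_source_to_target(source_rows, target_rows, key, transform):
    """Compare transformed source rows with target rows, matched by key.

    `transform` is a hypothetical stand-in for the ETL business rule: it
    maps one source row to the row we expect to find in the target.
    """
    expected = {row[key]: transform(row) for row in source_rows}
    actual = {row[key]: row for row in target_rows}

    report = {
        # Keys that were extracted but never arrived in the target.
        "missing_in_target": sorted(expected.keys() - actual.keys()),
        # Keys in the target that no source row explains.
        "unexpected_in_target": sorted(actual.keys() - expected.keys()),
        # (key, column, expected value, actual value) for each difference.
        "mismatched": [],
    }
    for k in sorted(expected.keys() & actual.keys()):
        for column, want in expected[k].items():
            got = actual[k].get(column)
            if got != want:
                report["mismatched"].append((k, column, want, got))
    return report
```

In a CI/CD pipeline, a test step could fail the build whenever any of the three lists in the report is non-empty.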

Key Features

- Automated ETL data testing workflows.

- Smart data comparison engine.

- Full CI/CD integration.

Pros

- Purpose-built for ETL validation.

- Good regression testing capabilities.

- Detailed reporting and dashboards.

Cons

- Focused mainly on ETL

- Requires ETL process expertise.

- It can be expensive.

Best For: ETL

Pricing: Contact for pricing details

Website: https://www.querysurge.com

5. Great Expectations

Great Expectations is a popular open-source Python tool that brings software testing practices to data validation. It lets data teams write unit tests for their data so that quality rules are enforced throughout data pipelines.

The tool supports declarative validation tests that are simple to read and maintain, which makes collaboration between data engineers and data scientists smoother. Great Expectations integrates with popular data tools such as Airflow and Jupyter notebooks, as well as various data warehouses.
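
The sketch below shows what "declarative" means in practice: the checks are written as data, and a small runner evaluates them. It is plain Python and intentionally does not use the Great Expectations API. The check names (`not_null`, `between`, `in_set`) and the column names are made up for the example.

```python
# Expectations are declared as data, separate from the code that runs them.
# Check and column names here are hypothetical, for illustration only.
EXPECTATIONS = [
    {"check": "not_null", "column": "order_id"},
    {"check": "between", "column": "quantity", "min": 1, "max": 100},
    {"check": "in_set", "column": "status", "values": {"new", "shipped", "returned"}},
]


def _passes(expectation, value):
    """Evaluate a single expectation against a single cell value."""
    check = expectation["check"]
    if check == "not_null":
        return value is not None
    if check == "between":
        return value is not None and expectation["min"] <= value <= expectation["max"]
    if check == "in_set":
        return value in expectation["values"]
    raise ValueError(f"unknown check: {check}")


def run_expectations(rows, expectations):
    """Run every expectation over all rows and report failing row indexes."""
    results = []
    for expectation in expectations:
        column = expectation["column"]
        failing = [
            index
            for index, row in enumerate(rows)
            if not _passes(expectation, row.get(column))
        ]
        results.append(
            {
                "check": expectation["check"],
                "column": column,
                "success": not failing,
                "failing_rows": failing,
            }
        )
    return results
```

Because the rules are just a list, they are easy to review, version, and extend without touching the runner.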

Key Features

- Declarative data validation testing.

- Integrations with widely used data tools.

- Automated documentation system.

Pros

- Free and open-source.

- Active developer community support.

- Excellent documentation and resources.

Cons

- Requires Python programming experience.

- Requires manual setup and configuration.

- Limited user interface options.

Best For: Developers

Pricing: Free (open-source)

Website: https://greatexpectations.io

6. Datafold

Datafold is a proactive data quality monitoring tool built for modern data engineering and analytics teams working in cloud environments. Its distinctive Data Diff feature automatically compares datasets across different environments to detect changes, regressions, or unexpected differences.

This is especially useful during development, when you need to confirm that code changes have not broken existing data pipelines. Datafold plugs directly into CI/CD workflows to keep bad data out of production systems. The tool also offers column-level lineage tracking, which helps teams understand exactly how data transformations affect downstream reports and analyses.
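
As a rough illustration of what a data diff reports, the following Python sketch summarizes the differences between a production dataset and a development build of the same dataset. It is not Datafold's implementation. The function name `diff_datasets` and the shape of the summary are assumptions for the example.

```python
from collections import Counter


def diff_datasets(prod_rows, dev_rows, key):
    """Summarize how a development dataset differs from production.

    Rows are matched on `key` (assumed unique). The summary counts rows
    that exist on only one side and, per column, how many matched rows
    carry a different value.
    """
    prod = {row[key]: row for row in prod_rows}
    dev = {row[key]: row for row in dev_rows}

    changed_per_column = Counter()
    for k in prod.keys() & dev.keys():
        for column in prod[k].keys() | dev[k].keys():
            if prod[k].get(column) != dev[k].get(column):
                changed_per_column[column] += 1

    return {
        "rows_only_in_prod": len(prod.keys() - dev.keys()),
        "rows_only_in_dev": len(dev.keys() - prod.keys()),
        "changed_values_per_column": dict(changed_per_column),
    }


def has_differences(summary):
    """True when the diff found anything a reviewer should look at."""
    return bool(
        summary["rows_only_in_prod"]
        or summary["rows_only_in_dev"]
        or summary["changed_values_per_column"]
    )
```

A CI job could call `has_differences` on the summary and block a merge until someone has reviewed the change.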

Key Features

- Automated data diffing technology.

- Seamless CI/CD integration.

- Column-level lineage tracking.

Pros

- Helps prevent production data problems.

- Easy integration with existing workflows.

- Modern cloud architecture.

Cons

- Relatively new in the market

- Limited to cloud platforms

- Fewer enterprise features at present.

Best For: Engineers

Pricing: Contact for pricing information

Website: https://www.datafold.com

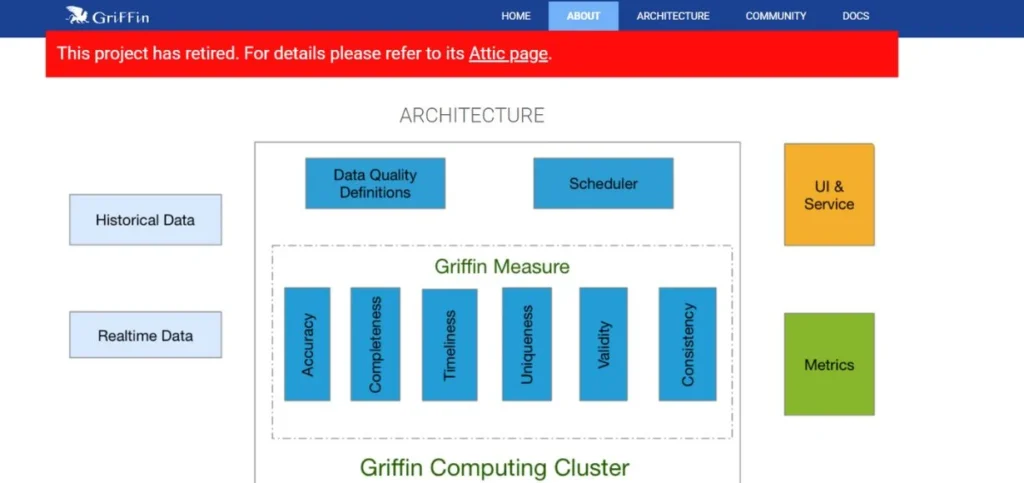

7. Apache Griffin

Apache Griffin is an open-source data quality tool developed under the Apache Software Foundation that checks that both batch and streaming data meet business standards. It offers real-time data quality evaluation, which suits organizations that process continuous streams of data from IoT devices, clickstreams, or transaction systems.

The platform's flexibility lets users define different dimensions of data quality, such as accuracy, completeness, consistency, and timeliness. Apache Griffin works with big data technologies such as Hadoop, Spark, and Kafka, which makes it well suited to large-scale environments.
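
Quality dimensions like these are usually expressed as ratios between 0 and 1. The Python sketch below shows one plausible way to compute three of them on small in-memory datasets. It does not use Apache Griffin, and the exact definitions (for example, treating accuracy as the share of source rows that appear unchanged in the target) are assumptions made for illustration.

```python
from datetime import datetime, timedelta


def completeness(rows, column):
    """Share of rows where `column` has a non-empty value."""
    if not rows:
        return 1.0
    filled = sum(1 for row in rows if row.get(column) not in (None, ""))
    return filled / len(rows)


def accuracy(source_rows, target_rows, key):
    """Share of source rows that appear unchanged in the target."""
    if not source_rows:
        return 1.0
    target = {row[key]: row for row in target_rows}
    matched = sum(1 for row in source_rows if target.get(row[key]) == row)
    return matched / len(source_rows)


def timeliness(rows, column, now, max_age):
    """Share of rows whose timestamp in `column` is no older than `max_age`."""
    if not rows:
        return 1.0
    fresh = sum(1 for row in rows if now - row[column] <= max_age)
    return fresh / len(rows)
```

For example, `timeliness(events, "event_time", datetime.now(), timedelta(hours=1))` would give the fraction of events that arrived within the last hour, where `events` is a hypothetical list of row dictionaries.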

Key Features

- Real-time and batch validation.

- Custom rule-based quality checks.

- Scalable big data platform

Pros

- Free open-source solution

- Handles streaming data well

- Strong big data integration

Cons

- Requires big data expertise

- Cumbersome setup and configuration.

- Limited commercial support.

Best For: Big Data

Pricing: Free (open-source)

Website: https://griffin.apache.org

8. Ataccama ONE

Ataccama ONE combines data profiling, validation, and governance in a single AI-driven platform. It helps organizations define business rules, detect anomalies in data, and maintain consistency across complex, multi-source data environments.

Its AI-powered rule generation feature automatically suggests validation rules based on the patterns in your data, which greatly reduces setup time. Ataccama ONE offers in-depth data quality dashboards that let stakeholders view data health across the organization in real time.
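
To give a feel for rule suggestion, here is a deliberately simple Python sketch that derives candidate rules from a sample of data. It uses basic profiling (value ranges, distinct values, presence of nulls) rather than AI, and it is not how Ataccama ONE works internally. The function name `suggest_rules` and the threshold of five distinct values are assumptions.

```python
def suggest_rules(rows, max_distinct=5):
    """Suggest simple validation rules by profiling sample rows.

    For each column the sketch proposes:
      - required: True when no sampled value is missing
      - min / max: for purely numeric columns
      - allowed_values: for text columns with few distinct values
    """
    columns = sorted({column for row in rows for column in row})
    rules = {}
    for column in columns:
        values = [row.get(column) for row in rows]
        present = [value for value in values if value is not None]
        rule = {"required": len(present) == len(values)}

        is_numeric = present and all(
            isinstance(value, (int, float)) and not isinstance(value, bool)
            for value in present
        )
        is_text = present and all(isinstance(value, str) for value in present)

        if is_numeric:
            rule["min"] = min(present)
            rule["max"] = max(present)
        elif is_text and len(set(present)) <= max_distinct:
            rule["allowed_values"] = sorted(set(present))

        rules[column] = rule
    return rules
```

Suggested rules like these are a starting point only. A person who knows the business should review them before they are enforced.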

Key Features

- AI-assisted rule generation.

- Integrated quality and governance.

- Enterprise-wide scalability.

Pros

- Comprehensive unified solution.

- Strong AI automation capabilities.

- Good governance framework included.

Cons

- Premium enterprise pricing.

- Longer implementation timeline.

- Significant training investment required.

Best For: Governance

Pricing: Contact for enterprise pricing

Website: https://www.ataccama.com


9. Soda Core

Soda Core is a modern open-source data validation project that checks the quality of data in SQL-accessible data sources. It lets data teams write simple YAML-based checks that validate data at rest, without moving it. The tool integrates easily with data orchestration systems such as Airflow, Dagster, and Prefect, so it can be added to existing data pipelines.

Soda Core supports automated data validation across several databases, such as Snowflake, BigQuery, Redshift, and PostgreSQL. Its lightweight design adds little overhead to your systems while still offering powerful validation features. The tool issues clear, actionable alerts when data quality problems are found so that they can be resolved quickly.
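
The following Python sketch illustrates the general idea of checking data where it lives by running SQL against the database. It does not use Soda Core or its YAML check language. It uses Python's built-in `sqlite3` module as a stand-in database, and the `customers` table, the check names, and the demo rows are invented for the example.

```python
import sqlite3

# Each check is a SQL query that returns the number of violations.
# A check passes when that number is zero. Table and column names
# are hypothetical.
CHECKS = {
    "customers is not empty": (
        "SELECT CASE WHEN COUNT(*) > 0 THEN 0 ELSE 1 END FROM customers"
    ),
    "email is never missing": (
        "SELECT COUNT(*) FROM customers WHERE email IS NULL"
    ),
    "id is unique": (
        "SELECT COUNT(*) FROM "
        "(SELECT id FROM customers GROUP BY id HAVING COUNT(*) > 1)"
    ),
}


def run_checks(connection, checks):
    """Run each check query in the database and map its name to pass/fail."""
    results = {}
    for name, sql in checks.items():
        (violations,) = connection.execute(sql).fetchone()
        results[name] = violations == 0
    return results


def build_demo_database():
    """Create a small in-memory table with one missing email and one duplicate id."""
    connection = sqlite3.connect(":memory:")
    connection.execute("CREATE TABLE customers (id INTEGER, email TEXT)")
    connection.executemany(
        "INSERT INTO customers VALUES (?, ?)",
        [(1, "a@example.com"), (2, None), (2, "b@example.com")],
    )
    return connection
```

Calling `run_checks(build_demo_database(), CHECKS)` reports that the table is not empty but fails the missing-email and uniqueness checks. Only the query results leave the database, so the data itself is never copied out.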

Key Features

- Simple YAML-based validation.

- Support for SQL data sources.

- Lightweight design for pipeline integration.

Pros

- Easy to learn and use

- Free open-source tool

- Modern cloud-friendly architecture.

Cons

- Limited to SQL sources

- Fewer advanced features.

- Growing but still small community.

Best For: Pipelines

Pricing: Free (open-source) with paid cloud version

Website: https://www.soda.io

10. Datagaps ETL Validator

Datagaps ETL Validator is built for automated ETL testing and validates data in data warehouses, business intelligence reports, and APIs. It offers end-to-end regression testing features that help ETL pipelines keep working consistently as source systems and business rules change over time.

A simple visual test case builder lets you create intricate validation scenarios without much coding knowledge. Datagaps helps validate data across numerous sources and targets, including traditional databases and modern cloud data platforms. Its comparison algorithms work effectively on billions of records, which makes it suitable for enterprise-scale data validation.

Key Features

- End-to-end ETL and BI testing

- API and database validation

- Visual test case builder

Pros

- Extensive testing capabilities.

- Performance that scales.

- Solid reporting and analytics.

Cons

- Focused primarily on ETL

- Steeper initial learning curve.

- Enterprise-only pricing model.

Best For: Testing

Pricing: Contact for pricing quotes

Website: https://www.datagaps.com

How to Choose the Right Data Validation Tool

- Evaluate Your Data Environment: First, understand what data you have, how much of it there is, and how complex it is. Select tools that fit your particular infrastructure and technology stack.

- Assess Ease of Use: Look for easy-to-use interfaces that your team can work with. Complex tools need more training and resource investment.

- Check Integration Capabilities: Make sure the tool integrates with your current data systems. Smooth integration shortens implementation time, reduces technical difficulty, and considerably lessens interoperability problems.

- Consider Scalability Requirements: Select tools that can cope with your business data as it grows. Scalable solutions help you avoid expensive migrations and system replacements in the future.

- Review Pricing Model: Compare costs against features and long-term business value. Look at the initial investment as well as ongoing maintenance costs.

Conclusion

Any business that relies on accurate information to make decisions needs data validation tools. In this article, we have discussed ten of the top data validation tools on the market today, each with distinct capabilities to help you maintain clean and reliable data.

Keep in mind that the right data validation tool depends on your needs, your level of experience, your budget, and your data landscape. Take time to analyze the alternatives, use free trials where they exist, and involve your team in the process.

As data keeps growing in size and importance, efficient data validation tools are no longer a luxury. Start improving your data quality today by picking one of these tools and changing the way your business handles information.

FAQs

1. What are data validation tools used for?

Data validation tools automatically verify data accuracy, completeness, and consistency. They make sure that no data enters your systems without following your rules, which prevents errors and significantly improves data quality.

2. Are data validation tools expensive?

Prices range from free open-source solutions to enterprise systems. Many data validation tools have flexible pricing options, including free trials, so businesses of any size can use them.

3. Can small businesses use data validation tools?

Absolutely! Many data validation tools offer easy-to-use interfaces for small companies. Free options such as Great Expectations and Soda Core provide robust validation without requiring a large budget.

4. How do data validation tools improve data quality?

Data validation tools automatically test data and flag errors immediately. They help keep bad data out of your systems, reduce time spent on manual work, and give you consistent, reliable data across your organization.

5. Do I need coding skills to use data validation tools?

Not always! Some tools require programming experience, but many modern data validation tools offer visual interfaces and low-code options. Tools such as Datameer and Informatica make it easy for business users to write validation rules.
